The Reason AI Is Not Delivering Is Not the Tools. It's Everything Around Them. And Research Is No Exception.

The tech industry has spent over $700 billion on AI so far. A 2026 NBER study then asked 6,000 executives across four countries whether any of it moved the needle. 89% said no productivity boost. 90% said no employment impact. The METR study of experienced developers using the latest AI tools found they completed tasks 19% slower with AI than without it.

Seven hundred billion dollars. Nineteen percent slower.

Sit with that for a second.

[...]

Nobody wants to say the obvious thing so I will say it. The problem is not the AI. The problem is that organizations bought tools and called it transformation. They dropped AI into whatever structure already existed, sent the all-hands announcement, updated the careers page to say "AI-powered," and waited for the numbers to change.

The numbers did not change because the structure did not change. The structure never changes. That is the whole problem and it has always been the whole problem and yet here we are, somehow surprised, again.

A Brief History of Transformation Theater

Let me take you back to agile. Remember agile? The promise was faster delivery, empowered teams, fewer approvals, better software. What happened in most organizations is they renamed their meetings, bought Jira licenses, hired a Scrum Master who looked increasingly haunted as the months went by, and kept doing exactly what they were doing before. Same decisions made by the same people in the same way. Just with more standups and a board that turned red every Friday.

Then design thinking arrived in a cloud of post-its and empathy maps. Innovation labs opened. Exposed brick appeared in offices that previously had normal walls. People started saying "how might we" in meetings where the answer had already been decided. The org chart stayed identical. The VP who ignored user research before design thinking ignored it after. The post-its went up and came down and nothing changed except that everyone owned a Sharpie.

Then lean. Then OKRs. Then digital transformation, which was agile with a larger consulting invoice.

Now AI. Same play. Bigger budget. Much better graphics.

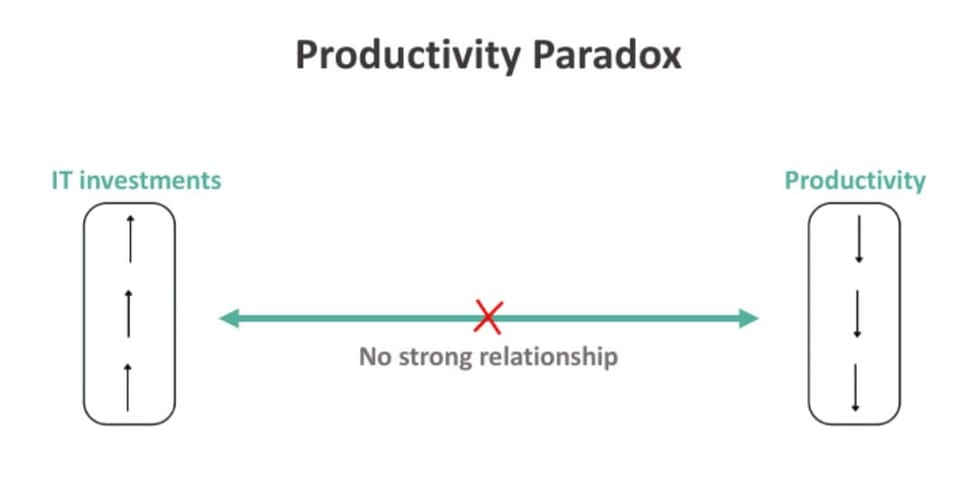

The pattern is so consistent it has its own name. Researchers call it the productivity paradox, and economists have been writing about it since the 1980s, when computers showed up everywhere except in the productivity data. Apollo's chief economist Torsten Slok recently dusted off the Solow paradox from forty years ago and applied it to AI. "AI is everywhere except in the incoming macroeconomic data," he wrote. "You don't see AI in the employment data, productivity data, or inflation data."

Forty years. Different tool. Same paradox. Same reason.

The reason is always the same. The tool is not the problem. The structure the tool got dropped into is the problem. And nobody ever changes the structure because changing the structure is hard and buying tools is easy and announcing tools is even easier and posting about it on LinkedIn is easiest of all.

What Actually Happened When You Dropped AI Into Your Organization

Here is a precise description of what happened in most organizations that "adopted AI."

Someone senior came back from a conference or read a memo or got scared by a competitor announcement. An email went out. A task force was formed. The task force identified tools. The tools got purchased. A rollout happened. Usage metrics were collected. The metrics showed adoption was up. The deck went to leadership. Leadership was satisfied. The subject was considered closed.

Meanwhile the actual work continued exactly as before, except now there was an extra step where you checked whether the AI output was any good before using it. Which, depending on the task, added anywhere from five minutes to two hours to the process. The 77% of professionals in the CSIRO survey who said AI increased their workload were not failing to use the tools correctly. They were using tools that had been placed inside workflows that were never redesigned to receive them.

You gave someone a faster car and left them in the same traffic jam. The car is not the problem.

In research specifically the failure mode is almost poetic in its consistency. Transcription got automated. Great. Synthesis got faster. Wonderful. Repositories started filling up more quickly. Tremendous. And then the research function continued operating exactly as it always had, answering questions as they came in from whoever asked loudest, running studies on timelines that had nothing to do with when decisions were actually being made, producing decks that got acknowledged in meetings and then quietly ignored.

The service model is still the service model. AI made it faster. A faster service model is still a service model. You are now wrong more efficiently, reactive more quickly, and irrelevant at higher velocity. Congratulations on your Dovetail subscription.

The Noise Machine Is Not Helping

Here is where it gets worse.

While all of this was happening, a parallel industry emerged to explain it. Consultants who learned what AI was from a client engagement started selling... AI Consultancy. Influencers who had never run a research study in a real organization with real constraints started publishing content about AI research strategy. LinkedIn filled up with people explaining the future of UXR who had spent approximately eighteen months in the field before pivoting to thought leadership.

They are not lying exactly. They are describing what the tools can do in ideal conditions, which is genuinely impressive, and extrapolating that into a vision of organizational change that requires none of the hard organizational work to achieve. Buy the tool. Follow the framework. Transform. The framework is usually a two-by-two matrix or a five-step process with alliterative names and enough abstraction that it cannot possibly be wrong.

This noise is not neutral. It actively makes things worse. It gives organizations the vocabulary of transformation without the substance. It gives leaders something to point to when asked what they are doing about AI. It creates the illusion that the problem has been addressed when the address is a hotel and nobody actually lives there.

The field does not need another LinkedIn carousel about AI-powered research. It needs someone to do the unglamorous structural work of figuring out how research organizations actually have to change, at the level of operating model and governance and engagement, to produce real value from these tools. That work does not fit in a carousel. It does not generate the same engagement numbers. It requires actual thought and it will make some people uncomfortable and it cannot be learned in a weekend workshop.

The Term I Hate and the Idea Behind It That Is Actually Right

I need to talk about "AI-native."

I hate this term. I hate it with a specific and well-documented passion. It has the same energy "digital-native" had in 2014, which is to say it sounds meaningful and says almost nothing. Every vendor whose product works with an API now describes itself as AI-native. Teams that use ChatGPT to write their meeting summaries describe themselves as AI-native. It is a marketing term cosplaying as an organizational philosophy and I want it to retire immediately.

That said.

The spirit behind it is correct and important and the field needs to take it seriously even while rejecting the branding.

What AI-native is trying to say, underneath the buzzword costume, is that you cannot just add AI to what you already do. You have to rethink what you do from the beginning, with AI as a given rather than an addition. The operating model. The governance. The way work gets structured, prioritized, routed, reviewed, and communicated. All of it. From zero. Not as an upgrade to the existing system but as a replacement for it.

That is a genuinely radical idea. It is also genuinely correct. And almost nobody is actually doing it.

The organizations getting real productivity gains from AI, the ones showing up in the 2024 BCG study and the 2025 P&G study, are not the ones that bought the best tools. They are the ones that redesigned the work around the tools. They started from the question of what they were trying to produce and worked backwards to what the operating model needed to look like to produce it, with AI as a design constraint rather than an afterthought.

That is not a tool decision. That is an organizational design decision. It is harder, slower, less photogenic, and entirely absent from most of the AI transformation content currently circulating on the internet.

What the Field Actually Needs

Not a new tool. Not a carousel. Not a consultant who discovered research eighteen months ago and is now very confident about its future.

The field needs to ask a different question. Not "what AI tool should we adopt" but "what does a research function actually have to look like to produce real value in a world where the tooling has fundamentally changed." Those are not the same question. Most organizations are only asking the first one.

The researchers who figure this out are not going to be the ones with the best stack. They are not going to be the ones who posted the most content about AI transformation. They are going to be the ones who had the discipline to sit with the uncomfortable question before everyone else did.

Because if the people actually doing this work in the field don't build the framework, someone else will. And we all know how that goes. Anyone remember how design thinking landed? The post-its, the two-by-two matrices, the consultants who had never run a study in their lives explaining research to researchers. I'd rather not do that again.

I have been working through exactly this. The operating modes, the routing logic, the governance model, the engagement framework. You can see the thinking developing across a few recent pieces: the case for micro research as a distinct operating mode (here), why AI-enabled teams need structural change not just faster tools (here), and what it actually means to maintain an organizational model of your users rather than just accumulate studies (here). These are pieces of a larger framework I have been working on in private. It will be out soon.

The pattern is forty years old. Different tool, same paradox, same reason. At some point the industry has to decide whether it wants to keep running the same play or actually change something.

The choice is not complicated. It is just hard. And hard does not fit in a carousel.

This post was inspired by Torsten Slok's two-line masterpiece "Waiting for the AI J-Curve" and the Fortune piece that unpacked it. Slok is Apollo's chief economist and somehow said more in two paragraphs than most people say in a twenty-slide deck. The core observation: AI is everywhere except in the macroeconomic data. Sound familiar? It should. Robert Solow said the same thing about computers in 1987. Worth five minutes of your time.
