AI-Unmoderated Research is a Modality Now. Stop Arguing About What to Call It. It Doesn't Need Your Approval. It Needs a Framework.

Five people sent me the same link on the same morning. That's either a sign the UXR community has fully become a hive mind, or that something important just happened.

It was the second thing.

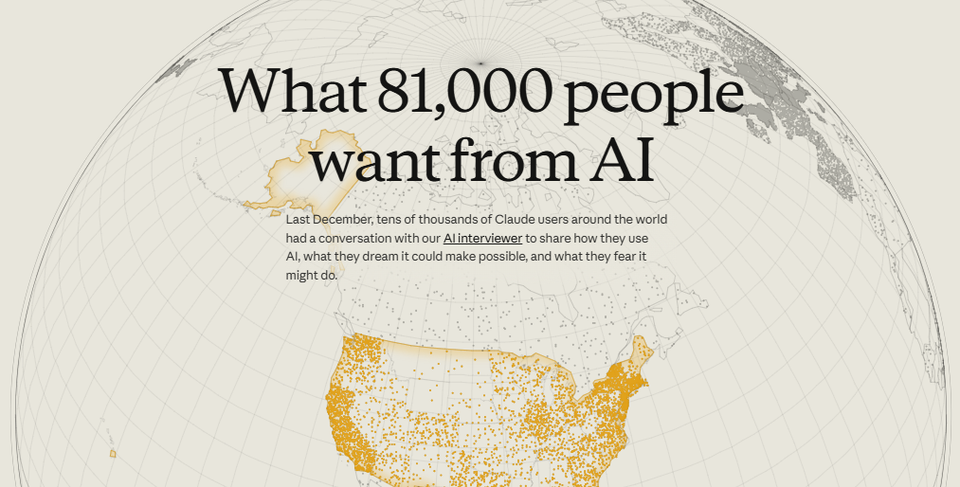

Anthropic published what they're calling the largest qualitative study ever conducted. 80,000 open-ended conversations. One week. 159 countries. 70 languages. Adaptive follow-up. Run entirely by an AI.

The field noticed. And then, predictably, the field did what the field does best.

It started arguing about definitions.

The Debate That Broke Out

Within hours of the study dropping, the field split into two camps and started throwing methodology at each other.

The qual researchers said this wasn't qual. No human moderator means no real probing. Rapport is what gets you honest answers and you can't build rapport with a chatbot. Non-verbal cues are gone entirely. The sample is Claude users who opted in, which is about as representative as surveying people at a tech conference about whether technology is good.

The survey researchers said it wasn't a survey either. The instrument was never psychometrically validated. No reliability testing. No replication framework. No way to know if you'd get the same results twice. Open-ended responses with no standardized scoring aren't survey methodology, they're just a lot of text.

Here's the best part. Both camps agreed on exactly one thing: whatever this was, it wasn't theirs. Anthropic ran what it calls the largest qualitative study ever conducted and the field responded by trying to return it like an Amazon package delivered to the wrong address.

Nobody wanted to claim it. Which is exactly how you know it's something new.

Both Camps Are Right. About the Wrong Thing.

Here's where I land. Both sides identified real weaknesses. I agree with most of their criticisms. But they made the same mistake: they blamed the modality when they should have blamed the absence of a framework around it.

The weaknesses they're pointing to are not inherent flaws in AI-unmoderated conversational data collection as a method. They're what happens when you run any modality without the proper structure around it. A human-moderated study run without a proper discussion guide, a clear research question, and a rigorous analysis protocol produces garbage too. We just don't blame the modality when that happens. We blame the researcher.

AI-unmoderated conversational research is neither qual nor survey. It's a new modality. New modalities don't fit cleanly into old taxonomies, which is what makes them useful and what makes the field uncomfortable about them.

This modality has genuine shortcomings on both sides. Compared to human-moderated research, you lose the non-verbal cues, the tangents worth chasing, the moment where a participant says something offhand and the researcher leans forward because that's the real insight. You lose the relational dynamic that sometimes unlocks the most honest answers. You lose real-time judgment in the room.

But you also lose what surveys do well. There's no psychometric validation. No standardized scoring that lets you run inferential statistics or benchmark against previous waves. No clean quantifiable output you can drop into a dashboard. No representative sampling framework. You can't say "27% of users feel X" and defend that number the way a well-designed survey lets you. The open-ended format that gives you depth is the same thing that makes the data resistant to the kind of structured analysis that gives stakeholders the numbers they love to put in decks.

Those are not small losses. Anyone telling you this is strictly superior to human-moderated research is selling something, and you should check their LinkedIn to see if they're a founder.

So you're not getting the depth of qual or the rigor of quant. Which sounds like a disaster until you understand what you're actually getting in exchange.

AI-unmoderated conversational research is not trying to replace qual or quant. It's filling a gap that neither could fill: directional depth at the speed a product team actually operates. It meets the team where they are, on their timeline, without forcing a choice between moving fast and talking to users. When paired with the right complementary methods, most of the shortcomings above stop being structural problems and start being known tradeoffs you design around. How exactly you build that pairing, and what the full architecture looks like, is something I'm working on. More on that soon.

Is it perfect? No. Is it a replacement for everything else? Absolutely not. Is it a legitimate modality with specific use cases where it produces tremendous value when run correctly? Yes. The question is what "run correctly" actually means. And that's where the field has nothing to say yet.

The Real Problem

Here's what nobody in the debate is saying.

Organizations are already running AI-unmoderated conversational research. Right now. Not after the field reaches consensus. Not after the methodology papers get published. Now. Researchers and product teams and ops folks who got tired of waiting six weeks for an answer are running this modality and shipping decisions on it.

Some of them are doing it well. Most of them are not, because there is no framework. There is no routing logic telling them which questions fit this modality and which ones don't. There is no quality standard defining what good looks like. There is no governance model explaining who reviews outputs and when. There is no engagement playbook for presenting findings from this kind of work to a stakeholder who still thinks "only eight people" is a devastating critique.

The debate about what to call it is happening in one room. The actual adoption is happening everywhere else, without the infrastructure it needs.

This is how every new modality enters the field. The practice outruns the framework. People run the work, get useful things out of it, and figure out the structure later. Sometimes much later. Sometimes never. When it's never, you get a decade of inconsistent quality, justified skepticism, and a modality that never reaches its potential because nobody built the scaffolding.

That's the conversation we should be having. Not what to call it. How to use it correctly.

What the Framework Actually Requires

If you take this seriously, you are looking at four things that need to be worked out simultaneously.

Routing. Not every question fits this modality. Some questions need a human in the room. Some need longitudinal depth. Some need the kind of real-time probing that requires an experienced researcher making judgment calls mid-conversation. Running AI-unmoderated research on those questions produces confident-sounding garbage, which is arguably worse than no research at all because it looks like signal.

But some questions fit this modality extremely well. The routing logic is not complicated once you have the right dimensions. Risk: how consequential is the decision this research is meant to inform? Ambiguity: how well understood is the problem space? Expiry: how quickly does the answer need to arrive to actually reach the decision? Low risk, low ambiguity, short expiry is where AI-unmoderated research lives. That is not a small category. It is the majority of the questions product teams are asking every week. The problem is not that the modality has a narrow fit. The problem is that most teams have no routing logic at all. They default to whatever is fastest rather than whatever is appropriate, which is how you end up with AI-unmoderated research on questions that needed a human in the room.
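To make the three dimensions concrete, here is a minimal sketch of what that routing check could look like in code. Everything in it is an assumption for illustration: the `Level` scale, the `ResearchQuestion` fields, the `route` function, and especially the 14-day cutoff for "short expiry" are hypothetical and not part of any published framework. Treat it as a way to think about the rule, not as the rule.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """Hypothetical three-point scale for risk and ambiguity."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class ResearchQuestion:
    text: str
    risk: Level        # how consequential is the decision this informs?
    ambiguity: Level   # how poorly understood is the problem space?
    expiry_days: int   # days until the answer is too late to reach the decision


# Assumption: "short expiry" means the answer is needed within two weeks.
SHORT_EXPIRY_DAYS = 14


def route(question: ResearchQuestion) -> str:
    """Suggest a modality: low risk, low ambiguity, short expiry fits AI-unmoderated."""
    fits_ai_unmoderated = (
        question.risk is Level.LOW
        and question.ambiguity is Level.LOW
        and question.expiry_days <= SHORT_EXPIRY_DAYS
    )
    # Anything outside that corner goes to a researcher to decide,
    # rather than defaulting to whatever is fastest.
    return "ai_unmoderated" if fits_ai_unmoderated else "escalate_to_researcher"


if __name__ == "__main__":
    label_check = ResearchQuestion(
        text="Do users understand the new export button label?",
        risk=Level.LOW,
        ambiguity=Level.LOW,
        expiry_days=5,
    )
    print(route(label_check))
```

The design choice worth noticing is the fallback. A question that misses on any one dimension does not get routed to the fastest option by default. It gets handed to a person.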

Quality standards. Speed without rigor is noise with a faster turnaround. What does a well-run AI-unmoderated study actually look like? What is the minimum viable structure for the conversation guide? How do you evaluate whether the follow-up logic is producing depth or just length? How do you assess thematic saturation when the data comes back in hours rather than weeks? These are not rhetorical questions. They have answers. The field just has not written them down yet. And critically, quality standards for this modality are not the same as quality standards for human-moderated research. Applying the old framework to a new modality produces either false confidence or reflexive rejection. Neither is useful.

Governance. Who reviews AI-unmoderated research outputs before they ship? What is the standard of evidence required for a finding to influence a product decision? How do you maintain methodological integrity when the work is complete before a review meeting could have been scheduled under the old model? Most teams running this right now have no answer to any of these questions. They have a researcher, a tool, and a deadline. That is not a governance model. Governance is also where the frame comes in: the organization's shared understanding of its users. AI-unmoderated research operates inside that understanding, which is either current or it is not. Running fast research inside a degraded frame produces findings that are technically correct and strategically useless. The governance model has to include a check on whether the frame the study is operating within is sound enough to produce reliable answers.

The engagement model. Stakeholders have been trained to treat research as a slow and sacred process. A study takes weeks. The readout is an event. The findings carry authority because they took forever and cost a lot. When you deliver something meaningful in three days, the reaction is often suspicion rather than gratitude. Rebuilding the trust model requires being explicit about what this modality can and cannot do, which questions it answers well, and why the speed is a feature rather than a red flag. That conversation has to be designed. It does not happen by accident. Rebuilding that trust also requires being explicit about expiry: these findings are valid until the screen changes, the pricing changes, or the decision has been made. Findings from fast, narrowly scoped studies are not authoritative forever. Stating that upfront is not a weakness. It is what makes the modality honest.

What Actually Needs to Happen

The field has two options.

It can keep arguing about whether AI-unmoderated conversational data collection is "really" qualitative research. This will generate a lot of LinkedIn engagement, several conference panels, and at least one heated methodological debate that ends without resolution at a happy hour in Seattle (those who know, know). The adoption will continue regardless.

Or it can accept that a new modality exists, acknowledge that it has genuine strengths and genuine weaknesses, and start building the framework that determines when to use it, how to run it well, and how to know when it went wrong.

One of those options moves the field forward. The other one is just decoration on a process that is already happening without us.

The modality doesn't need the field's approval. It's already in production. What it needs is the infrastructure that turns it from a fast and occasionally unreliable shortcut into a legitimate and repeatable research mode.

That infrastructure doesn't exist yet. Building it is the actual work.
